What is Agentic AI?

Quick Insights:

Agentic AI is built for outcomes, not just outputs. It can understand a goal, break it into steps, use tools, and act with minimal supervision. It typically works through a repeating loop of perception, reasoning, planning, action, memory, and learning, which helps it adapt instead of stopping after one answer.

AI is not just answering anymore. It is starting to act. That one shift changes everything. A 2025 survey of 1,000 IT and business executives found that 51% of organizations had already deployed AI agents and another 35% planned to do so within two years. Another forecast says that by 2028, 33% of enterprise software applications will include agentic AI, while 15% of day-to-day work decisions could be made autonomously. But here’s the flip side: a 2026 trust study found that nearly two-thirds of respondents see security and risk concerns as the top barrier to scaling agentic AI, and 72% call cybersecurity a highly relevant risk. In short, the market is moving fast, and the guardrails are racing to catch up.

What is Agentic AI?

Agentic AI is an autonomous, goal-driven AI system that can plan, execute, and refine actions to achieve a result without constant human hand-holding. Traditional AI usually follows fixed rules. Generative AI usually creates content. Agentic AI goes a step further: it turns knowledge into action. It does not just tell you what to do; it can do the work within the limits you set.

A regular AI assistant may summarize an incident report. An agentic system can read the report, pull supporting logs, enrich indicators, determine likely severity, trigger the right workflow, and escalate only when human judgment is needed. That is why agentic AI feels less like a chatbot and more like a digital operator.

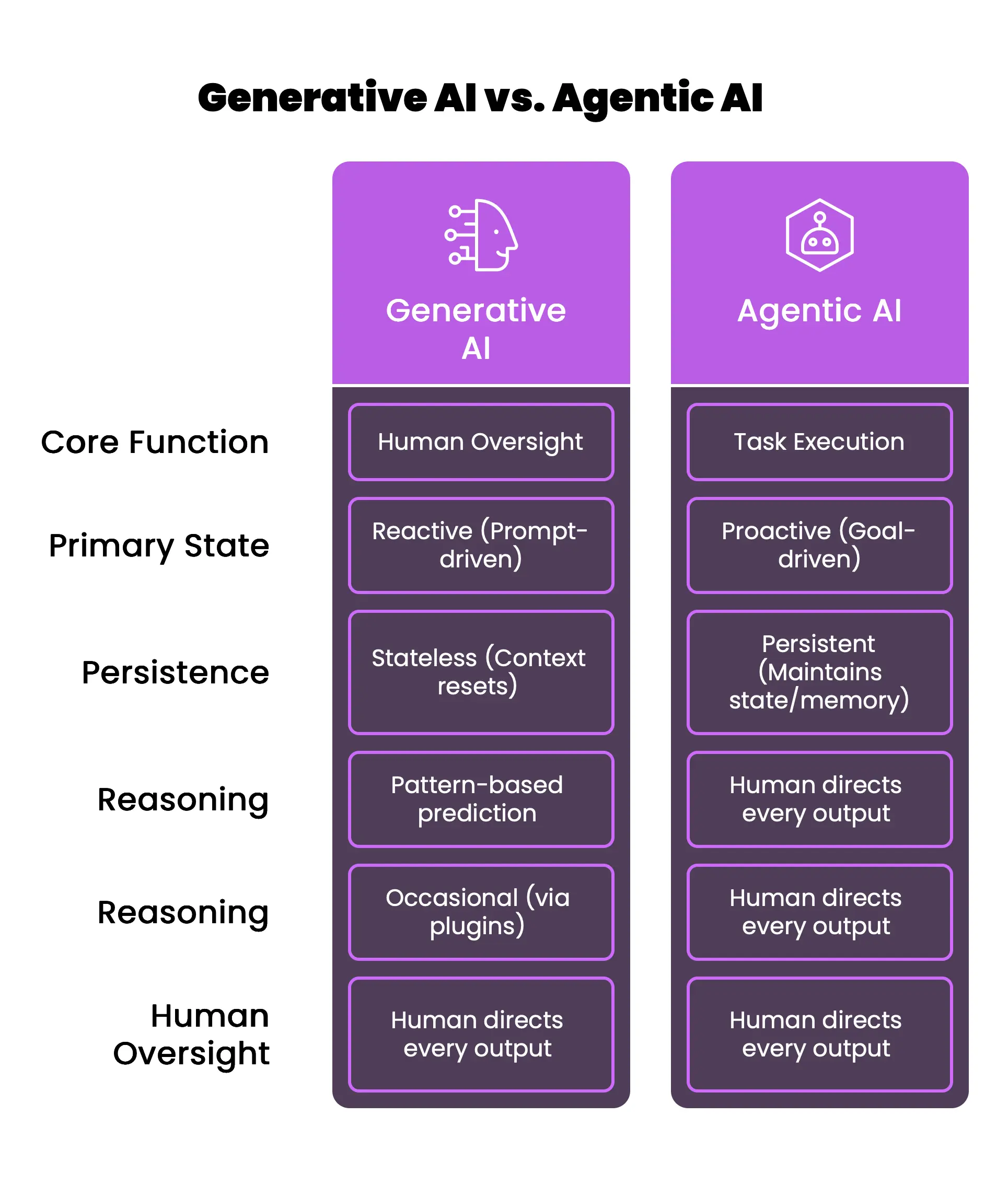

How is Agentic AI different from Generative AI?

While generative AI and agentic AI both utilize large language models, they represent fundamentally different paradigms of interaction and capability. Generative AI is a master of content creation, acting as a reactive partner that crafts text, images, or code based on patterns learned from training data. Agentic AI, however, is a proactive system designed for task execution and goal achievement.

1. Reactivity vs. Proactivity

The most significant difference is how the systems initiate work. Generative AI is reactive: it waits for a user prompt, returns a single response, and then sits idle until the next prompt. It behaves much like a stateless tool, since context is usually limited to the current session and resets afterward. Agentic AI is proactive: it is designed to pursue objectives that require a series of decisions and actions over time. It does not merely answer a question; it identifies the next steps and executes them autonomously.

2. Content Creation vs. Operational Execution

Generative AI is limited to the synthesis of information within the digital box of the chat interface. While it can draft a professional email, it cannot determine whether that email needs to be sent, find the recipient’s address, or schedule a follow-up meeting based on the response. Agentic AI bridges this gap. It acts as the operational layer that manages the work around the content. It can orchestrate external processes, coordinate multiple tools, and maintain continuity across days or weeks.

3. Single Inference vs. Iterative Reasoning Loops

From a technical standpoint, a generative model typically handles each prompt in a single pass: it receives the input, produces an output, and stops. Agentic systems call the model repeatedly in a loop, feeding the result of each action back in as new input. This iterative nature allows the system to evaluate its own progress, detect errors in its planning, and recalibrate its approach if environmental conditions change.
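The contrast between a single call and a loop can be sketched in a few lines of Python. This is a toy illustration, not a real agent: the numeric "goal", the `AgentState` class, and the `choose_step` rule are all hypothetical stand-ins for a model deciding what to do next.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentState:
    goal: int                 # hypothetical target the agent must reach
    value: int = 0            # current state of the toy environment
    history: List[str] = field(default_factory=list)


def single_inference(prompt: str) -> str:
    """One-shot pattern: one call, one output, no follow-up."""
    return f"draft answer for: {prompt}"


def choose_step(gap: int) -> int:
    """Stand-in for the model's re-planning: pick the next move from the gap."""
    if gap >= 3:
        return 3
    return 1 if gap > 0 else -1


def run_agent_loop(state: AgentState, max_steps: int = 10) -> AgentState:
    """Iterative pattern: check progress, re-plan, act, and repeat."""
    for _ in range(max_steps):
        gap = state.goal - state.value      # evaluate progress toward the goal
        if gap == 0:
            state.history.append("done")
            break
        step = choose_step(gap)             # recalibrate based on current state
        state.value += step                 # act on the environment
        state.history.append(f"step {step:+d} -> {state.value}")
    return state


if __name__ == "__main__":
    print(single_inference("summarize the incident"))
    print(run_agent_loop(AgentState(goal=7)).history)
```

The `max_steps` cap is a deliberate choice in this sketch: a loop that can act repeatedly also needs a bound so it cannot run indefinitely.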

The Architecture Behind Agentic AI

The architecture of Agentic AI is designed to transform a passive large language model (LLM) into a goal-oriented engine through a structured process of perception, reasoning, and execution. This architectural shift represents the move from “one-shot” responses to a continuous feedback loop that allows the system to learn from its actions and refine its strategies over time.

1. The Perception Module

The perception stage serves as the sensory system for the agentic entity. It involves the ingestion and interpretation of data from a wide variety of sources, including network traffic logs, databases, sensors, user interface inputs, and external API feeds. Through technologies such as natural language processing (NLP) and computer vision, the perception module builds a contextual awareness of the current environment. In a cybersecurity setting, this might involve scanning thousands of incoming emails for phishing indicators or monitoring behavioral baselines across millions of endpoints.
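As a rough illustration of the perception step, the sketch below turns a raw email record into structured observations that later stages could reason over. The `Observation` type, the field names (`subject`, `sender`, `reply_to`), and the keyword list are assumptions made for this example; a production system would rely on far richer signals than two simple heuristics.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Observation:
    source: str   # where the data came from, e.g. "email"
    entity: str   # who or what the signal is about
    signal: str   # short label for what was noticed


# Hypothetical keywords that often show up in phishing subjects.
SUSPICIOUS_WORDS = ("urgent", "verify", "password")


def perceive_email(raw: Dict[str, str]) -> List[Observation]:
    """Convert one raw email record into zero or more observations."""
    observations: List[Observation] = []
    subject = raw.get("subject", "").lower()
    sender = raw.get("sender", "")

    # Heuristic 1: pressure or credential language in the subject line.
    if any(word in subject for word in SUSPICIOUS_WORDS):
        observations.append(Observation("email", sender, "suspicious_subject"))

    # Heuristic 2: replies are redirected to a different address.
    reply_to = raw.get("reply_to", "")
    if reply_to and reply_to != sender:
        observations.append(Observation("email", sender, "reply_to_mismatch"))

    return observations
```

The point of the design is the normalization: whatever the source, the rest of the agent only sees a uniform list of observations.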

2. The Reasoning Engine and Cognitive Module

Often referred to as the “brain” of the system, the reasoning engine is typically powered by a large language model that coordinates specialized sub-models. This module is responsible for analyzing the perceived data, identifying the core objective, and formulating a plan of action. Unlike simpler systems, the reasoning engine can handle ambiguity; it uses logic and learned patterns to navigate complex scenarios and unexpected obstacles. To ensure high accuracy and relevance, many architectures incorporate Retrieval-Augmented Generation (RAG), which allows the model to access proprietary data and specific knowledge bases without retraining the underlying model.
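The retrieval half of RAG can be sketched without any model at all. The example below scores documents by simple keyword overlap and assembles a prompt from the best matches. Real deployments typically use embeddings and a vector index instead; keyword overlap is swapped in here only to keep the sketch self-contained. The `KNOWLEDGE_BASE` entries and function names are hypothetical.

```python
import re
from typing import List, Set

# Hypothetical internal documents the base model was never trained on.
KNOWLEDGE_BASE = [
    "Runbook: isolate a workstation when ransomware encryption is detected.",
    "Policy: data transfers above 1 GB to external IPs require approval.",
    "Guide: resetting cloud service credentials for a compromised user.",
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Return up to top_k documents sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]      # drop non-matches
    scored.sort(key=lambda pair: pair[0], reverse=True)    # best match first
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query: str, documents: List[str]) -> str:
    """Prepend retrieved context to the question before calling a model."""
    lines = ["Context:"]
    lines += [f"- {doc}" for doc in retrieve(query, documents)]
    lines.append(f"Question: {query}")
    return "\n".join(lines)
```

Because the knowledge lives in the document store rather than in model weights, updating a runbook changes the agent's answers without any retraining.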

3. Strategic Planning and Memory Systems

Once the reasoning engine identifies a goal, the planning module decomposes that objective into smaller, actionable sub-tasks. The system determines the most efficient sequence of steps and selects the appropriate tools for each task. This planning is supported by a dual-memory system:

- Short-term Memory: This component maintains the context of the current conversation or task, ensuring continuity across multiple steps in a single session.

- Long-term Memory: Often implemented using vector stores or knowledge graphs, this memory allows the system to retain historical data and learned behaviors, enabling it to improve its performance based on previous successes and failures.
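A minimal sketch of how planning and the two memory types might fit together is shown below. Everything here is illustrative: the `PLAYBOOK` mapping, the `AgentMemory` class, and the rule that a past escalation adds an analyst notification are assumptions, and a plain dictionary stands in for the vector store or knowledge graph mentioned above.

```python
from collections import deque
from typing import Deque, Dict, List

# Hypothetical playbook mapping a goal to ordered sub-tasks.
PLAYBOOK: Dict[str, List[str]] = {
    "investigate_login": ["pull_auth_logs", "check_geolocation", "score_risk"],
}


class AgentMemory:
    def __init__(self, short_term_size: int = 3) -> None:
        # Short-term: bounded window of notes from the current session.
        self.short_term: Deque[str] = deque(maxlen=short_term_size)
        # Long-term: outcomes of past runs, keyed by goal (a plain dict here,
        # standing in for a vector store or knowledge graph).
        self.long_term: Dict[str, List[str]] = {}

    def remember_step(self, note: str) -> None:
        """Add a note; the oldest note is dropped once the window is full."""
        self.short_term.append(note)

    def archive(self, goal: str, outcome: str) -> None:
        """Persist the outcome of a finished run for future planning."""
        self.long_term.setdefault(goal, []).append(outcome)


def plan(goal: str, memory: AgentMemory) -> List[str]:
    """Decompose a goal into sub-tasks, adjusted by past outcomes."""
    steps = list(PLAYBOOK.get(goal, []))
    # If an earlier run of this goal was escalated, add an analyst notification.
    if "escalated" in memory.long_term.get(goal, []):
        steps.append("notify_analyst")
    return steps
```

The bounded `deque` mirrors a limited context window, while the long-term store is what lets a later plan differ from an earlier one for the same goal.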

4. The Action and Execution Layer

The final stage of the cycle is the action module, which translates high-level plans into concrete, real-world outcomes. This is achieved by interfacing with external software via APIs, writing and executing code, or triggering specific commands within an enterprise’s IT infrastructure. The system can autonomously log into systems, update records, or even isolate compromised devices, performing these tasks with minimal human intervention.
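One common way to structure an action layer is a registry of named tools that the agent can call, with a gate in front of the riskier ones. The sketch below assumes two hypothetical tools, `update_record` and `isolate_device`, which return strings instead of calling real APIs. Which actions need sign-off is a policy choice; the `REQUIRES_APPROVAL` set here is only an example.

```python
from typing import Callable, Dict, Set


def update_record(record_id: str) -> str:
    """Placeholder for a call to a ticketing or CMDB API."""
    return f"record {record_id} updated"


def isolate_device(device_id: str) -> str:
    """Placeholder for a call to an endpoint management API."""
    return f"device {device_id} isolated"


# The only actions the agent is able to invoke.
TOOLS: Dict[str, Callable[[str], str]] = {
    "update_record": update_record,
    "isolate_device": isolate_device,
}

# Illustrative policy: disruptive actions wait for a human unless pre-approved.
REQUIRES_APPROVAL: Set[str] = {"isolate_device"}


def execute(action: str, argument: str, approved: bool = False) -> str:
    """Run a registered tool, refusing unknown actions and gating risky ones."""
    if action not in TOOLS:
        raise ValueError(f"unknown action: {action}")
    if action in REQUIRES_APPROVAL and not approved:
        return f"{action} queued for human approval"
    return TOOLS[action](argument)
```

Keeping the registry explicit means the plan can only name actions that someone has deliberately exposed, which narrows what a faulty or manipulated plan can do.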

| Architectural Module | Primary Function | Key Characteristics |
|---|---|---|
| Perception | Environmental Awareness | Ingests data from sensors, logs, and APIs; builds context. |
| Reasoning | Decision Making | Analyzes perceived data; identifies the core objective; handles ambiguity. |
| Planning | Task Decomposition | Breaks complex goals into sub-tasks; chooses execution path. |
| Memory | Context Retention | Tracks current session (short-term) and history (long-term). |
| Action | Execution | Performs API calls; interacts with external systems and tools. |
| Learning | Self-Optimization | Evaluates outcomes and refines future decision models. |

Practical Cybersecurity Use Cases

1. Autonomous Threat Hunting and Anomaly Detection

Traditional threat hunting is a resource-heavy, reactive discipline that relies on human analysts to manually sift through enormous datasets to find the “needle in the haystack” of indicators of compromise (IOCs). This process is often hampered by alert fatigue and the sheer volume of logs generated by modern enterprise networks. Agentic AI transforms this by acting as a vigilant, 24/7 sentinel that proactively hunts for hidden patterns and deviations.

In this use case, an agentic system is deployed with the objective of maintaining the integrity of the corporate network. The system’s perception module continuously monitors network traffic, user login behaviors, and system logs across endpoints. Instead of waiting for a known malware signature to be detected, the agent uses behavioral analytics to establish a “normal” activity baseline for every user and entity.

If a specific employee account, which typically operates between 9 AM and 5 PM, suddenly initiates a multi-gigabyte data transfer to an unfamiliar external IP at 3 AM, the agentic system identifies this deviation. The reasoning engine then analyzes the context: Is this a scheduled backup? Has the user’s location changed? By correlating this with other data points, such as recent suspicious API calls or unusual browser fingerprints, the system can determine the probability of a credential compromise. Because the system has agency, it does not just flag this for a human; it can autonomously initiate an investigation, checking historical logs to see if similar patterns have occurred elsewhere in the network. This proactive stance allowed one health system to resolve over 74,000 alerts automatically, escalating only 174 for manual review and achieving a 110% improvement in detection coverage.
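The 3 AM transfer example can be approximated with a very simple baseline check. The sketch below assumes a per-user history of daily transfer volumes in megabytes and flags an event only when it is both far above that baseline (by z-score) and outside working hours. The threshold of 3.0, the 9-to-17 window, and the function names are illustrative assumptions; real behavioral analytics combine many more signals.

```python
from statistics import mean, pstdev
from typing import List, Tuple


def anomaly_score(
    baseline_mb: List[float],
    observed_mb: float,
    hour: int,
    work_hours: Tuple[int, int] = (9, 17),
) -> Tuple[float, bool]:
    """Return (z-score of the transfer size, whether it happened off-hours)."""
    mu = mean(baseline_mb)
    sigma = pstdev(baseline_mb) or 1.0   # avoid dividing by zero on flat baselines
    z = (observed_mb - mu) / sigma
    off_hours = not (work_hours[0] <= hour < work_hours[1])
    return z, off_hours


def is_suspicious(
    baseline_mb: List[float],
    observed_mb: float,
    hour: int,
    z_threshold: float = 3.0,
) -> bool:
    """Flag transfers that are both unusually large and outside work hours."""
    z, off_hours = anomaly_score(baseline_mb, observed_mb, hour)
    return z > z_threshold and off_hours


if __name__ == "__main__":
    history = [40.0, 55.0, 50.0, 45.0, 60.0]      # typical daily volumes in MB
    print(is_suspicious(history, 4000.0, hour=3))  # multi-gigabyte transfer at 3 AM
    print(is_suspicious(history, 58.0, hour=14))   # ordinary afternoon transfer
```

A flag from a check like this would be the trigger for the follow-up investigation described above, not a verdict on its own.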

2. Intelligent Incident Response and Automated Remediation

In the event of a successful breach, the most critical metric for a security team is the speed of containment. Manual incident response involves a sequence of high-pressure steps: verifying the threat, identifying the scope, isolating affected systems, and eradicating the malicious presence. Agentic AI accelerates this process to machine speed, performing remediation actions in seconds that would traditionally take human analysts hours to coordinate.

Consider a scenario where the system detects the early stages of ransomware activity on a developer’s workstation. The agentic system’s reasoning engine recognizes the pattern of unauthorized file encryption and immediately classifies it as a critical threat. Working within predefined guardrails, the agent autonomously executes a series of containment actions: it isolates the workstation from the internal network to prevent lateral movement, terminates the malicious processes, and disables the compromised user’s cloud service credentials.
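The containment sequence in this scenario, together with its guardrails, might look like the following sketch. The `PROTECTED_HOSTS` set, the host and user names, and the audit log format are hypothetical, and each action is only recorded as a string where a real system would call an EDR or identity provider API.

```python
from typing import List, Set

# Hypothetical guardrail: hosts the agent must never isolate on its own.
PROTECTED_HOSTS: Set[str] = {"domain-controller-01"}


def contain_ransomware(host: str, user: str, audit_log: List[str]) -> bool:
    """Run the containment steps in order, or escalate if a guardrail applies.

    Returns True when containment ran and False when it was handed to a human.
    Every decision is appended to audit_log so analysts can review it later.
    """
    if host in PROTECTED_HOSTS:
        audit_log.append(f"escalate: {host} is protected, human approval required")
        return False

    actions = (
        f"isolate host {host}",                    # stop lateral movement first
        f"terminate malicious processes on {host}",
        f"disable cloud credentials for {user}",
    )
    for action in actions:
        audit_log.append(action)
    return True


if __name__ == "__main__":
    log: List[str] = []
    contain_ransomware("dev-ws-17", "jdoe", log)
    contain_ransomware("domain-controller-01", "svc-admin", log)
    print("\n".join(log))
```

Isolation comes first in the sequence because it limits spread while the remaining steps run, and the audit log keeps autonomous actions reviewable after the fact.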

Furthermore, the system does not stop at containment. It can autonomously trigger “self-healing” protocols, such as initiating a system rollback from a clean backup or generating infrastructure-as-code templates to reconfigure firewalls across the entire enterprise. By the time a human analyst is alerted, the breach may already be neutralized and the “blast radius” contained. This level of autonomous action has been reported to reduce response times by as much as 52%, allowing organizations to maintain operational continuity even under attack.

Conclusion

The transition to agentic AI is not about replacing human experts but about amplifying their capabilities. By 2028, a projected 15% of day-to-day work decisions will be made autonomously by agentic systems, a shift that would let the human workforce focus on high-value strategic initiatives rather than routine data processing.

The most valuable AI systems of the next decade will be those that maintain continuity and collaborate effectively with both humans and other agents. This requires a higher level of AI literacy across the organization, where employees understand how to interact with these systems, validate their reasoning, and intervene when necessary. In this new era, the focus of leadership shifts from managing tasks to managing outcomes and objectives.

CAIGS Training with InfosecTrain

Agentic AI is powerful, but without the right governance, it can introduce more risk than value. That’s why organizations are actively building expertise in AI governance, risk, and compliance before scaling autonomous systems. InfosecTrain’s Certified AI Governance Specialist (CAIGS) Training is designed to help professionals understand how to secure, control, and govern AI systems like Agentic AI, covering risk frameworks, regulatory alignment, and real-world implementation strategies. If you are working in cybersecurity, GRC, or AI-driven environments, this is the skillset that will define the next phase of your career.

Training Calendar of Upcoming Batches for Certified AI Governance Specialist Training

| Start Date | End Date | Start - End Time | Batch Type | Training Mode | Batch Status |
|---|---|---|---|---|---|
| 01-Jun-2026 | 02-Jul-2026 | 19:30 - 22:00 IST | Weekday | Online | Open |

Frequently Asked Questions

How does Agentic AI differ from Generative AI in business process automation?

Generative AI is focused on synthesis and drafting, while Agentic AI is focused on execution and outcomes, capable of independently managing multi-step workflows across different software platforms.

What is the architectural role of a Large Language Model in an AI agent?

The LLM acts as the central reasoning engine or "brain" that interprets goals, coordinates specialized sub-models, and plans the sequence of sub-tasks needed to achieve an objective.

What are the primary security risks of deploying autonomous AI agents in a SOC?

Key risks include memory poisoning of persistent state, goal manipulation by attackers, and the misuse of high-privileged tools or APIs through prompt injection or intent-breaking attacks.

How does Agentic AI improve incident response times compared to traditional security tools?

It reduces response times by automating triage and remediation end to end: isolating hosts, disabling accounts, and blocking IPs in seconds, without waiting for manual human intervention.

What strategies can organizations use to optimize their content for AI answer engines (AEO)?

Focus on building data-backed topic clusters, using clear pillar pages supported by cluster content, and implementing semantic linking and schema markup to enhance authority and crawlability for AI agents.