How Do Data Privacy Laws Apply to AI?

Quick Insights:

AI-driven services must play by the same privacy rules as any other technology, and often face closer scrutiny. Existing laws (like GDPR, CCPA/CPRA, and others) apply fully to AI systems that handle personal data. In practice, this means AI teams must build in privacy by design: map data flows, limit collection, run DPIAs, secure consent where it is required, and offer opt-outs. Regulators worldwide (from Europe’s DPAs to U.S. agencies) stress accountability and transparency; for example, GDPR restricts solely automated decisions with legal or similarly significant effects unless safeguards are in place, and states like California now require notices for AI profiling. Key steps include auditing AI pipelines, minimizing data use, updating privacy policies, enforcing access controls, and preparing impact assessments.

Why Are Data Privacy and AI Colliding?

Artificial intelligence is no longer a sci-fi novelty; it is baked into everyday applications and business systems. From chatbots that store conversations to algorithms that screen resumes, AI collects and learns from personal data. This “wild stallion” of technology brings enormous gains, but without careful controls it can bolt straight into privacy trouble.

In fact, AI incidents are spiking: a 2024 Stanford report noted a 56% jump in AI-related data breaches and failures in just one year. Surveys show ~40% of companies have already had an AI privacy incident, and nearly 70% of people distrust how businesses handle AI with their data. Regulators have noticed: the Global Privacy Assembly recently underscored that all existing data protection laws apply to AI, and urged privacy-by-design in AI development. The takeaway? Privacy is not a “nice to have” for AI projects; it is a must. Build respect for privacy into your AI strategy from Day 1, or you risk not only fines (which can reach millions) but also lost customer trust and legal trouble.

Which Privacy Laws Cover AI?

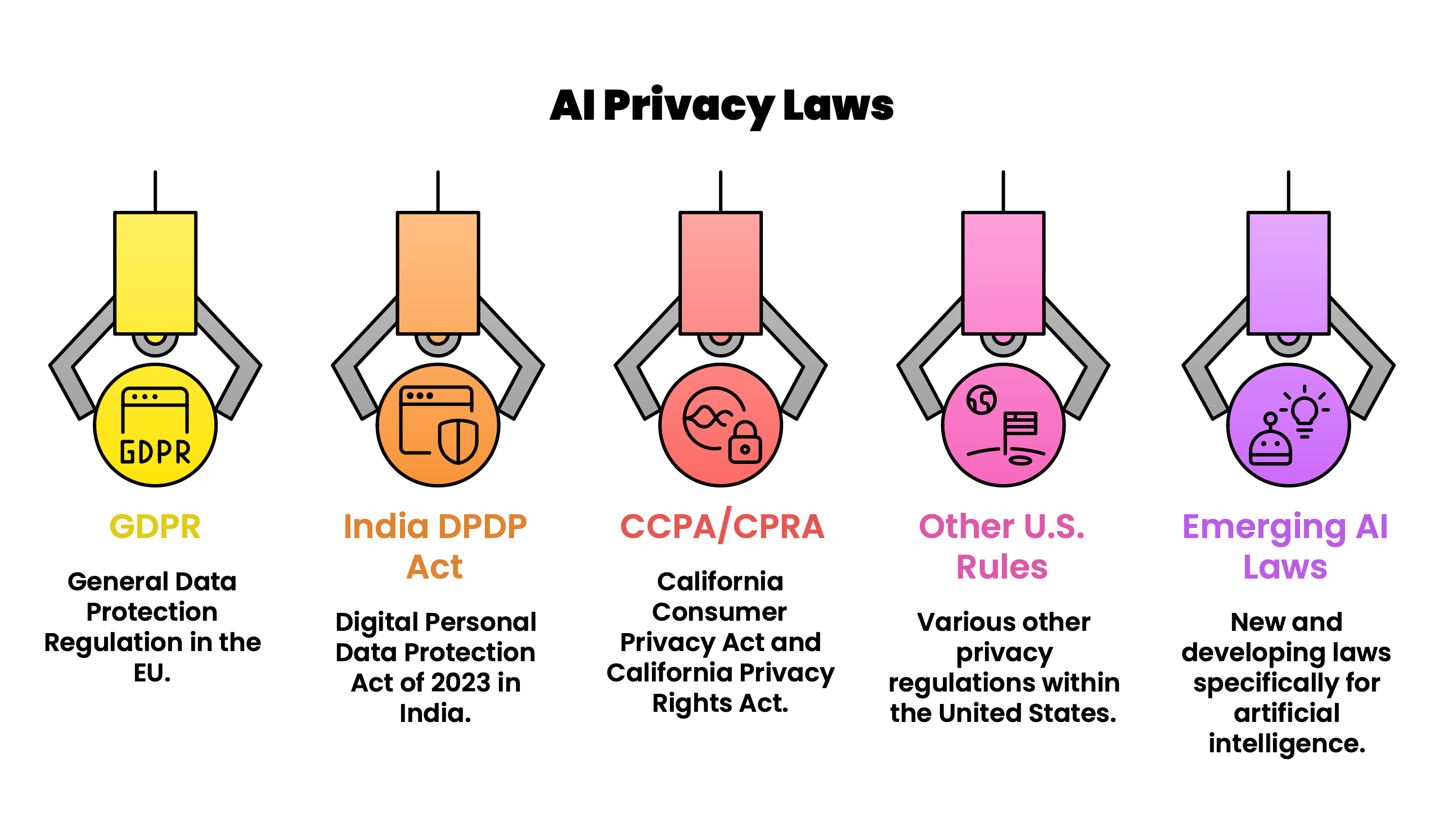

Global laws span many jurisdictions, but the big ones include the EU’s GDPR, U.S. state laws (like California’s CCPA/CPRA), Brazil’s LGPD, India’s new DPDP Act, and others. Here’s how they intersect with AI:

- GDPR (EU): Any AI that processes the personal data of people in the EU falls under the GDPR. It mandates a legal basis (often consent or legitimate interest), data minimization, purpose limitation, and transparency. Crucially, Article 22 gives individuals the right not to be subject to solely automated decisions that have legal or similarly significant effects (like credit approval), unless an exception such as explicit consent applies and safeguards like human review are in place. DPAs also stress Data Protection Impact Assessments for AI projects and require clear privacy notices explaining AI use.

- India DPDP Act (2023): India’s new privacy law (phased in by 2027) similarly emphasizes consent, purpose limitation, and accountability. Though it does not mention AI explicitly yet, any AI processing “digital personal data” in India must follow these principles. In practice, this means using only the data you have permission for and limiting how long you keep it. The organization that decides why and how the data is processed is the “Data Fiduciary” (comparable to a GDPR controller) and is accountable for compliance; those designated Significant Data Fiduciaries must also appoint a Data Protection Officer.

- CCPA/CPRA (California): California’s landmark privacy laws give residents the right to know what is collected about them, to delete it, and to opt out of its sale or sharing. The CPRA amendments added a right to limit the use of sensitive personal information and brought automated decision-making and profiling into scope. The new California privacy regulations (effective 2026) require notice and an opt-out for automated decision-making used in “significant” decisions, and also impose risk assessments and audits. Similar laws exist in Virginia (VCDPA) and Colorado, all emphasizing data minimization and consumer rights.

- Other U.S. Rules: There’s no national privacy law yet, but agencies enforce existing laws. The FTC has warned that using customer data for AI training “quietly” without notice can be deemed unfair or deceptive. Several federal agencies jointly confirmed that current civil rights, housing, and finance laws apply to AI-driven decisions just as they do to any practice.

- Emerging AI Laws: Beyond privacy laws, AI-specific regulations are arriving. The EU’s AI Act (in force since August 2024, with obligations phasing in through 2027) classifies AI systems by risk and requires strict safeguards (transparency, human oversight, bias mitigation) for “high-risk” use cases. States like Colorado, Texas, New York, and Utah have passed AI accountability laws focusing on discrimination and transparency. These often reinforce privacy principles (e.g., Texas bans high-impact AI-driven profiling without risk checks, and California will require disclosure when AI is used in decisions).
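The Article 22 rule described in the GDPR bullet above can be pictured as a routing step placed in front of a model's output. The following is a minimal sketch, not legal advice: the `Decision` fields, the `Route` values, and the `route_decision` function are all hypothetical names, and the logic deliberately simplifies the law to two questions (is the effect significant, and is there explicit consent with no pending review request).

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"                  # the model's decision may be applied directly
    HUMAN_REVIEW = "human_review"  # a person must review it before it takes effect


@dataclass
class Decision:
    subject_id: str
    outcome: str                    # e.g. "approve" or "decline"
    significant_effect: bool        # legal or similarly significant effect on the person
    explicit_consent: bool          # person explicitly agreed to automated handling
    review_requested: bool = False  # person asked for a human to look at the decision


def route_decision(d: Decision) -> Route:
    """Decide whether a model output can be applied without a human (simplified)."""
    if not d.significant_effect:
        return Route.AUTO
    # Significant effect: require a human unless there is explicit consent
    # and the person has not asked for human intervention.
    if d.review_requested or not d.explicit_consent:
        return Route.HUMAN_REVIEW
    return Route.AUTO


loan = Decision("user-17", "decline", significant_effect=True, explicit_consent=False)
print(route_decision(loan).value)
```

A real system would also need to cover the other Article 22 exceptions and log who reviewed what; the point here is only that the human-review safeguard becomes an explicit branch in code rather than an informal policy.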

Key AI Privacy Challenges

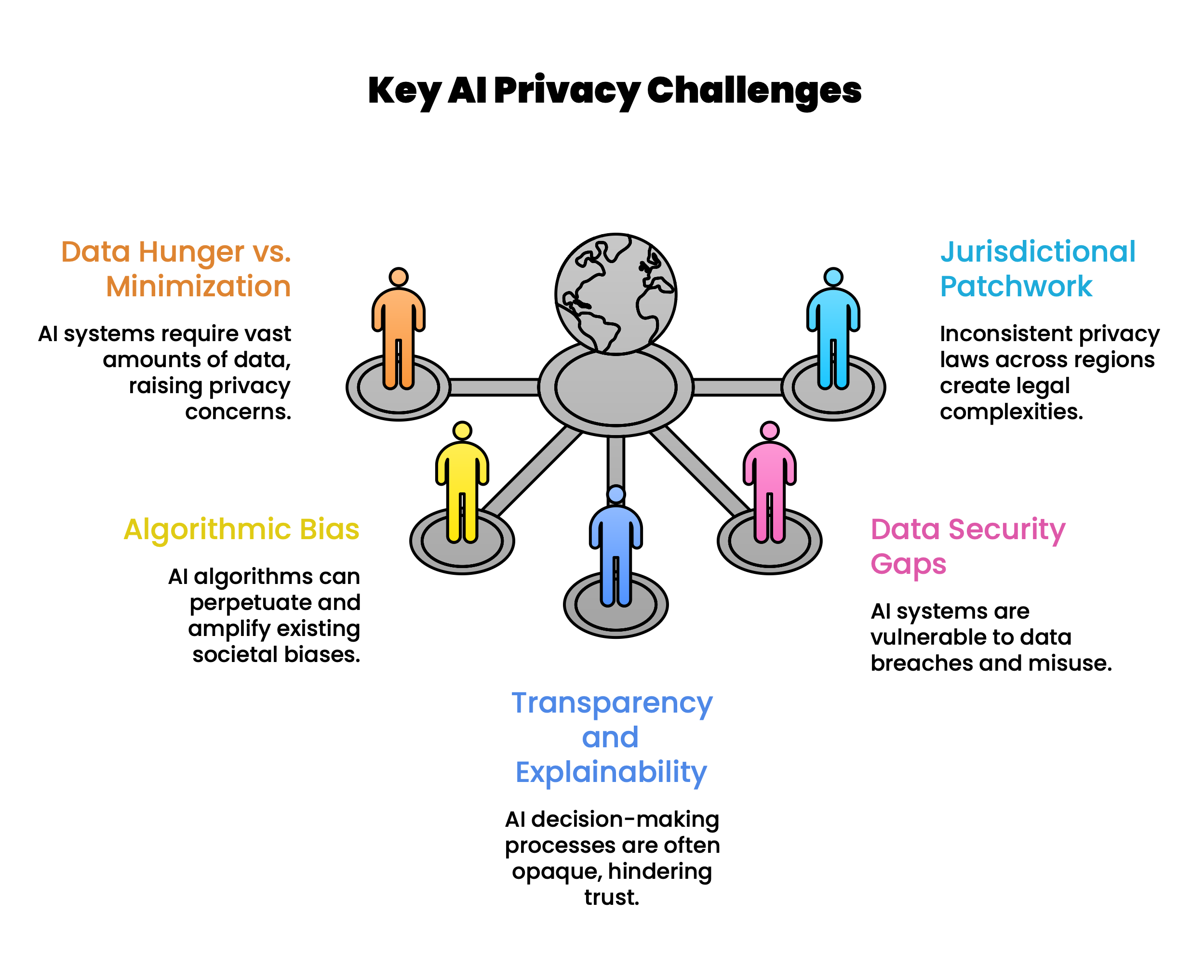

AI raises novel issues that can clash with privacy rules:

- Data Hunger vs. Minimization: Modern AI models often crave massive datasets, but privacy laws demand only “necessary” data. Over-collecting increases breach risk and violates principles of data minimization. Teams often hoard data “just in case” the model needs it, storing full profiles or sensitive fields that may never be needed. Compliance demands trimming datasets, using synthetic or anonymized data where possible, and deleting data once it is obsolete.

- Algorithmic Bias: AI can unintentionally learn societal biases from training data. Famous example: Amazon’s hiring AI, trained on past resumes, “learned” to downgrade women’s applications. Such hidden biases can lead to discrimination that breaches anti-discrimination and data laws. Privacy regulators are watching this closely: e.g., Colorado’s AI law is explicitly aimed at preventing algorithmic discrimination. The GDPR requires special care when processing sensitive categories (such as racial or ethnic origin, health, and sexual orientation) and gives individuals the right not to be subject to solely automated decisions with significant effects. Mitigating bias means auditing your data and models, and keeping humans “in the loop” for consequential decisions.

- Transparency and Explainability: Unlike simple software, AI often cannot show clearly how it reached a decision. This “black box” is a problem when laws require meaningful explanations. For example, GDPR demands that data subjects get meaningful information about the logic of automated decisions. Regulators from Europe to New Zealand say users should know when they are dealing with a machine (not a human). The FTC has warned against quietly rewriting privacy policies to permit new AI uses of customer data. Practically, you may need to label AI outputs (“This response was generated by an AI”) and provide understandable summaries of how the model works.

- Data Security Gaps: AI systems can introduce new security weaknesses. For example, attackers might extract personal data through “model inversion” or poison training data. Unauthorized “Shadow AI” (employees secretly using public chatbots with internal data) can expose confidential information. These risks underscore the need for strong security controls (encryption, RBAC, continuous monitoring) around all AI data pipelines.

- Jurisdictional Patchwork: Global organizations face a collage of rules. One AI rollout might touch EU residents (GDPR), users in India (DPDP), California users (CCPA), and beyond. These laws differ in definitions and requirements. For example, India’s DPDP Act has a narrower scope (digital data only, some consent exemptions), while U.S. states may focus on opt-outs and disclosures. Managing this patchwork is a major compliance headache.
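Of the challenges above, data minimization is the easiest to turn into code. The sketch below assumes a hypothetical per-purpose allowlist (`ALLOWED_FIELDS`) and made-up field names; it drops every field the stated purpose does not need and replaces the identifier with a keyed hash. Note that pseudonymized data generally still counts as personal data under the GDPR, so this reduces exposure rather than removing the data from scope.

```python
import hashlib
import hmac

# Hypothetical allowlist: the only fields each purpose is permitted to use.
ALLOWED_FIELDS = {
    "resume_screening": {"candidate_id", "skills", "years_experience"},
    "support_chatbot": {"customer_id", "message"},
}


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash so records stay linkable without the raw ID."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, purpose: str, id_field: str, key: bytes) -> dict:
    """Keep only the allowlisted fields for this purpose and pseudonymize the identifier."""
    allowed = ALLOWED_FIELDS[purpose]
    slim = {k: v for k, v in record.items() if k in allowed}
    if id_field in slim:
        slim[id_field] = pseudonymize(str(slim[id_field]), key)
    return slim


raw = {
    "candidate_id": "C-1001",
    "skills": ["python", "sql"],
    "years_experience": 4,
    "date_of_birth": "1990-01-01",  # not needed for screening, so it is dropped
    "home_address": "12 Example Street",
}
slim = minimize(raw, "resume_screening", "candidate_id", key=b"demo-key-rotate-me")
print(sorted(slim))
```

Running the filter at ingestion, before data reaches a training set or a prompt, means the fields a model never needed are never stored in the first place. The key in this example is a placeholder; a real deployment would keep it in a secrets manager.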

Conclusion

In essence, AI is not a “legal loophole”; it is another way of using data, and thus must conform to privacy laws. Experts around the world, from Europe’s regulators to the U.S. Department of Justice, agree that existing privacy laws already cover AI-driven data use. They also demand that companies prove their AI is safe: whether through DPIAs, documentation, or ethical audits, you must demonstrate responsible handling of personal data.

Compliance is an investment in trust. A well-governed AI pipeline reduces the chance of expensive breaches or fines (e.g., GDPR fines of up to €20 million or 4% of global annual turnover, whichever is higher) and avoids the PR nightmare of a scandal. It also lets your team innovate freely within a framework of accountability. Consumers notice this too: as one report warns, 80% of people expect companies to misuse their data in AI unless strong safeguards are in place. Meeting privacy standards can thus become a competitive advantage, not just a checkbox.

Frequently Asked Questions

Do data privacy laws like GDPR apply to AI?

Absolutely. Any AI system processing personal data must follow standard privacy laws. For example, GDPR covers automated decisions: it generally restricts decisions based solely on automated processing, including profiling, that have legal or similarly significant effects, and it requires firms to explain and limit their data use.

What is automated decision-making under privacy law?

This refers to decisions made by AI without meaningful human involvement (e.g., credit scoring). Under laws like GDPR, individuals can request human review of such decisions, and some U.S. state laws let them opt out. Businesses must assess the risk and rely on a valid basis, such as explicit consent, when AI makes consequential choices.

Do we need a DPIA for an AI project?

In most cases, yes. GDPR explicitly requires a Data Protection Impact Assessment whenever processing is likely to pose a high risk (e.g., health data, profiling), and some U.S. state laws require comparable assessments. A DPIA identifies privacy risks early and guides mitigation.
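A first-pass screening for that question can be automated. The sketch below is illustrative only: the `AIProject` fields and the three indicators are assumptions drawn from the examples in this answer (health data, profiling, significant automated decisions), not an official regulator checklist, and a match means "start a DPIA", not "the DPIA is done".

```python
from dataclasses import dataclass


@dataclass
class AIProject:
    name: str
    uses_health_or_sensitive_data: bool
    profiles_individuals: bool
    makes_significant_automated_decisions: bool


def dpia_triggers(project: AIProject) -> list[str]:
    """Return the illustrative high-risk indicators this project matches."""
    checks = {
        "processes health or other sensitive data": project.uses_health_or_sensitive_data,
        "profiles individuals": project.profiles_individuals,
        "makes significant automated decisions": project.makes_significant_automated_decisions,
    }
    return [reason for reason, hit in checks.items() if hit]


def needs_dpia(project: AIProject) -> bool:
    """Flag the project for a DPIA if any indicator matches."""
    return bool(dpia_triggers(project))


triage = AIProject("symptom-checker", True, False, False)
print(needs_dpia(triage), dpia_triggers(triage))
```

Returning the matched reasons alongside the yes/no answer gives the privacy team a starting point for the assessment itself.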

How can I make my AI system privacy-compliant?

Follow the privacy-by-design playbook: use only necessary data, keep it secure, obtain clear consent, update your privacy policy, and allow users to exercise rights (access, deletion) easily. Regularly review your AI outputs for bias or errors and document all procedures.
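The "exercise rights easily" item is where many AI projects fall short, because personal data ends up scattered across prompts, logs, and training sets. As a minimal sketch, assuming a single hypothetical in-memory store (`UserDataStore` is not a real library), access and deletion requests reduce to two small operations:

```python
class UserDataStore:
    """Toy in-memory store; a real system must also cover logs, backups, and training data."""

    def __init__(self) -> None:
        self._records: dict[str, dict] = {}

    def save(self, user_id: str, data: dict) -> None:
        """Store a copy of the data held about a user."""
        self._records[user_id] = dict(data)

    def access_request(self, user_id: str) -> dict:
        """Return a copy of everything held about the user (empty if nothing is held)."""
        return dict(self._records.get(user_id, {}))

    def deletion_request(self, user_id: str) -> bool:
        """Erase the user's data; return True if something was actually deleted."""
        return self._records.pop(user_id, None) is not None


store = UserDataStore()
store.save("user-42", {"email": "a@example.com", "chat_history": ["hi"]})
print(store.access_request("user-42"))
print(store.deletion_request("user-42"))
```

The hard part in practice is not these two methods but knowing every place a user's data lives, which is why the data-flow mapping step comes first in the playbook.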

What if my AI vendor stores data outside my country?

Many privacy laws (like GDPR) limit cross-border data transfer. If an AI vendor stores personal data in another country, you must ensure adequate legal safeguards (e.g., EU Standard Contractual Clauses) are in place. It is your responsibility to verify compliance even for third-party AI services.
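That verification can be made a routine check in vendor onboarding. The sketch below is a simplification under stated assumptions: `ADEQUATE_REGIONS` is a placeholder set rather than a list of real adequacy decisions, and the `Vendor` fields and status strings are hypothetical.

```python
from dataclasses import dataclass

# Placeholder values for illustration only; not a list of real adequacy decisions.
ADEQUATE_REGIONS = {"EU"}


@dataclass
class Vendor:
    name: str
    storage_region: str  # where the vendor stores personal data
    has_sccs: bool       # Standard Contractual Clauses signed with this vendor


def transfer_status(vendor: Vendor) -> str:
    """Classify a vendor's storage location as ok, ok with safeguards, or blocked."""
    if vendor.storage_region in ADEQUATE_REGIONS:
        return "ok"
    if vendor.has_sccs:
        return "ok_with_safeguards"
    return "blocked"


vendors = [
    Vendor("eu-hosted-llm", "EU", has_sccs=False),
    Vendor("overseas-llm", "US", has_sccs=True),
    Vendor("unknown-startup", "US", has_sccs=False),
]
for v in vendors:
    print(v.name, transfer_status(v))
```

A "blocked" result is a prompt to talk to legal before any personal data is sent, not a final ruling; other transfer mechanisms may exist that this sketch does not model.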