ISO 42001 Implementation Roadmap (Step-by-Step)

Quick Insights:

ISO 42001 gives organizations a practical way to govern AI before AI starts governing them. The smartest implementation path is simple: win leadership support, define a tight scope, inventory your AI systems, run a gap analysis, assess AI-specific risks, implement lifecycle controls, document evidence, and complete an internal audit before you ever invite a certification body in. If you already have a mature ISO 27001 program, the journey often moves faster because many management-system habits are already in place.

Why Does ISO 42001 Matter Now?

AI is not a side project anymore. It is business infrastructure. Stanford HAI reported that 78% of organizations used AI in 2024, up from 55% a year earlier, while global private investment in generative AI reached $33.9 billion. McKinsey & Company found that more than three-quarters of respondents now use AI in at least one business function, and that CEO oversight of AI governance is one of the strongest factors associated with stronger bottom-line impact. In other words, AI is scaling fast, and governance is no longer a legal afterthought. It is becoming a performance advantage.

The risk side is getting louder, too. IBM says ungoverned AI systems are more likely to be breached and more costly when they are. In its 2025 breach research, 63% of organizations lacked AI governance policies, and among organizations that reported an AI-related security incident, 97% lacked proper AI access controls. At the same time, the European Commission announced that the EU AI Act entered into force on August 1, 2024, adding more urgency to risk-based AI governance. That is exactly why ISO/IEC 42001 matters: the official ISO standard describes how to establish, implement, maintain, and continually improve an AI Management System, or AIMS, and positions itself as the world’s first AI management system standard.

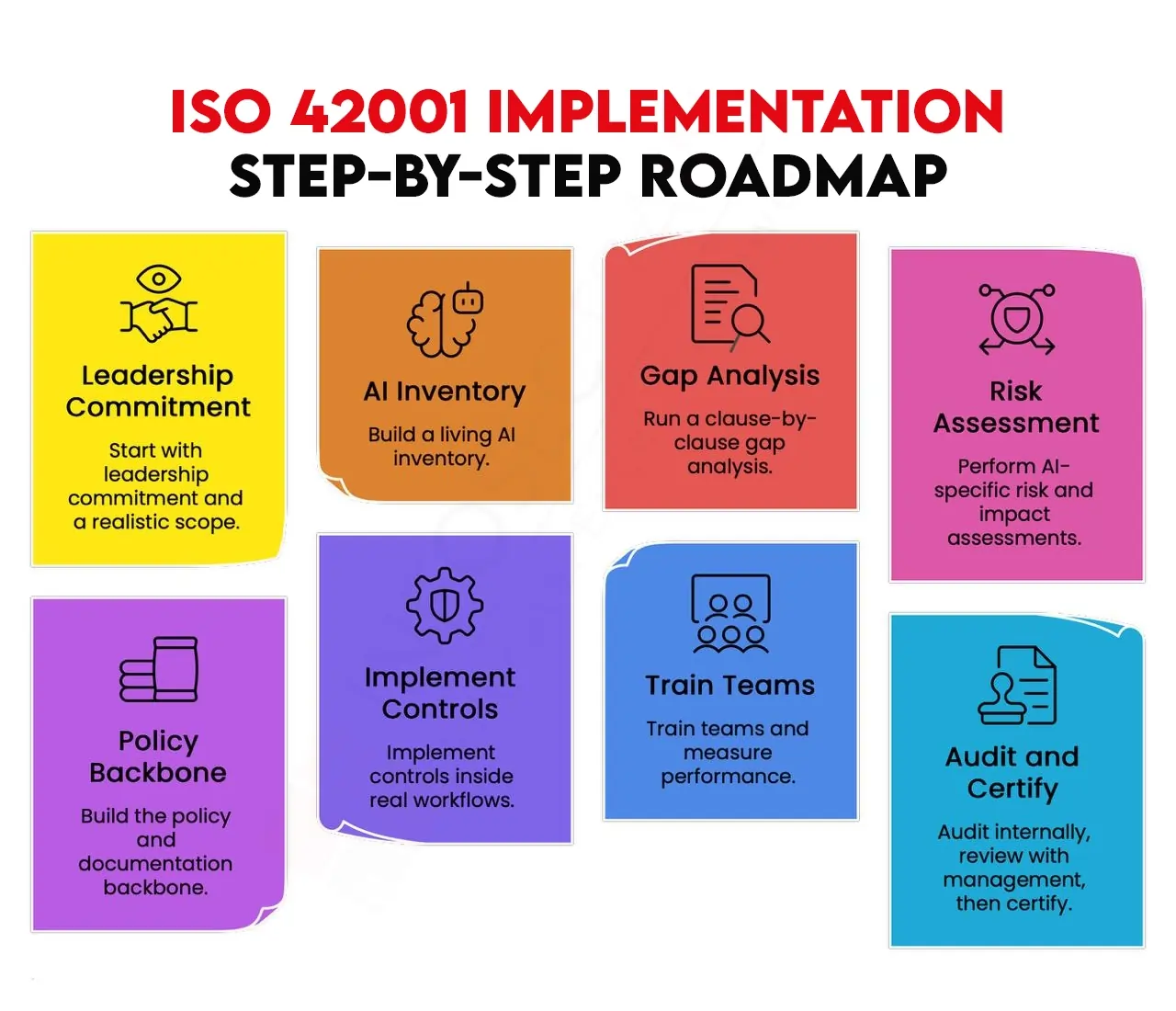

ISO 42001 Implementation Step-by-Step Roadmap

Below is a practical ISO 42001 implementation roadmap built for real-world conditions, especially for cybersecurity teams that need governance to be operational, not ornamental.

1. Start with leadership commitment and a realistic scope

Do not begin with controls. Begin with ownership. Multiple implementation guides recommend appointing an executive sponsor, a project lead, and a governance committee before anything else. Then define a focused AIMS scope based on business criticality, geography, customer obligations, or regulatory exposure. This is where many teams win or lose. If you try to govern every AI asset on day one, momentum dies. A tighter starting scope, such as a chatbot, credit-scoring model, or EU-facing AI workflow, is usually the better move.

2. Build a living AI inventory

You cannot govern what you cannot see. A real ISO 42001 program starts with a detailed inventory of in-scope AI systems, including owners, business purpose, model type, data sources, outputs, users, deployment scale, dependencies, and current governance gaps. The best guides are clear on one thing: this should be a living inventory, not a spreadsheet created the week before an audit. It becomes the foundation for scoping, risk ranking, accountability, and evidence collection.
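A living inventory is easier to keep honest when every entry has a fixed shape. The Python sketch below models one in-scope AI system using the fields listed above. It flags entries that have open governance gaps, no owner, or a review older than a chosen threshold. The `AISystemRecord` class, the `needs_attention` helper, the 90-day review window, and the example systems are hypothetical illustrations. ISO 42001 does not prescribe any of them.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI inventory; field names are illustrative."""
    name: str
    owner: str
    business_purpose: str
    model_type: str
    data_sources: list[str]
    outputs: str
    users: str
    deployment_scale: str
    last_reviewed: date
    dependencies: list[str] = field(default_factory=list)
    governance_gaps: list[str] = field(default_factory=list)
    in_scope: bool = True


def needs_attention(records: list[AISystemRecord], today: date,
                    max_review_age_days: int = 90) -> list[str]:
    """Return names of in-scope systems with open gaps, no owner, or a stale review."""
    flagged = []
    for record in records:
        if not record.in_scope:
            continue
        stale = (today - record.last_reviewed) > timedelta(days=max_review_age_days)
        if record.governance_gaps or not record.owner.strip() or stale:
            flagged.append(record.name)
    return flagged


# Example data only: two made-up systems.
inventory = [
    AISystemRecord(
        name="support-chatbot", owner="Customer Experience lead",
        business_purpose="Answer customer FAQs", model_type="Third-party LLM",
        data_sources=["help-center articles"], outputs="Text replies",
        users="External customers", deployment_scale="EU production",
        last_reviewed=date(2025, 1, 10),
        dependencies=["vendor LLM API"],
        governance_gaps=["No documented human escalation path"],
    ),
    AISystemRecord(
        name="credit-scoring", owner="Risk analytics manager",
        business_purpose="Score loan applications", model_type="Gradient-boosted trees",
        data_sources=["application data", "bureau data"], outputs="Risk score",
        users="Credit officers", deployment_scale="Single market",
        last_reviewed=date(2025, 2, 20),
    ),
]

print(needs_attention(inventory, today=date(2025, 3, 1)))  # prints ['support-chatbot']
```

Keeping the review date on each record is what makes the inventory "living". A scheduled job can rerun the check, so staleness is detected automatically instead of being discovered during an audit.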

3. Run a clause-by-clause gap analysis

Once the inventory is ready, compare your current state against the ISO 42001 clauses and the Annex A control objectives. Identify what already exists, what is partial, and what is missing. This is also the moment to map anything reusable from ISO 27001 or ISO 9001. Experts note that organizations with existing ISO 27001 structures can reuse a meaningful portion of their governance processes and documentation. That is why implementation estimates often shrink from roughly 6 to 12 months from scratch to around 4 to 6 months for more mature organizations. That time savings is real, but only if the reuse is disciplined, not assumed.
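A gap register can be as simple as one row per requirement with a status. The Python sketch below records the three states named above. To reflect the warning about assumed reuse, it downgrades any item marked as existing through a reused ISO 27001 process to partial until someone has verified that the process actually covers AI. The `GapItem` class, the `reuse_verified` flag, and all statuses in the example are hypothetical. Only the clause headings 4 to 10 come from the standard's structure.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    EXISTS = "exists"
    PARTIAL = "partial"
    MISSING = "missing"


@dataclass
class GapItem:
    requirement: str                    # an ISO 42001 clause or Annex A objective
    status: Status                      # what the assessor recorded
    reuse_source: Optional[str] = None  # e.g. an ISO 27001 process being reused
    reuse_verified: bool = False        # has someone checked it really covers AI?

    def effective_status(self) -> Status:
        # Reuse that has not been verified counts as partial, not complete.
        if self.status is Status.EXISTS and self.reuse_source and not self.reuse_verified:
            return Status.PARTIAL
        return self.status


def summarize(items: list[GapItem]) -> tuple[Counter, list[str]]:
    """Count effective statuses and list requirements that still need work."""
    counts = Counter(item.effective_status() for item in items)
    open_items = [item.requirement for item in items
                  if item.effective_status() is not Status.EXISTS]
    return counts, open_items


# Clause headings 4-10 are the standard's main requirement clauses;
# the statuses and reuse notes are made-up example data.
register = [
    GapItem("4 Context of the organization", Status.PARTIAL, "ISO 27001 context analysis"),
    GapItem("5 Leadership", Status.EXISTS, "ISO 27001 management roles", reuse_verified=True),
    GapItem("6 Planning", Status.MISSING),
    GapItem("7 Support", Status.EXISTS, "ISO 27001 document control"),
    GapItem("8 Operation", Status.MISSING),
    GapItem("9 Performance evaluation", Status.PARTIAL, "ISO 27001 internal audit program"),
    GapItem("10 Improvement", Status.EXISTS, "ISO 27001 corrective actions", reuse_verified=True),
]

counts, open_items = summarize(register)
print(counts)
print(open_items)
```

In this example, "7 Support" shows up as open work even though it was recorded as existing, because its reuse has not yet been verified.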

4. Perform AI-specific risk and impact assessments

This is the heart of the standard. Traditional cyber risk reviews are not enough because AI introduces different failure modes. Your risk methodology should examine bias, fairness, transparency, explainability, human oversight, safety, privacy, data quality, security, and model behavior in context. For each in-scope system, decide whether each risk should be accepted, mitigated, transferred, or avoided. The National Institute of Standards and Technology (NIST) AI Risk Management Framework (AI RMF) strengthens this step. It provides voluntary guidance organized around four functions (Govern, Map, Measure, and Manage), while ISO 42001 turns those ideas into auditable management-system practice.
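ISO 42001 does not mandate a particular scoring formula, so the following shows only one common pattern. Each risk gets a likelihood-times-impact score, which is compared against a risk appetite to produce a suggested default treatment. The 1 to 5 scales, the appetite threshold of 6, the order in which treatments are tried, and the `AIRisk` and `suggest_treatment` names are all assumptions for illustration. The risk owner still makes the final decision.

```python
from dataclasses import dataclass
from enum import Enum


class Treatment(Enum):
    ACCEPT = "accept"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    AVOID = "avoid"


@dataclass
class AIRisk:
    system: str
    dimension: str          # e.g. "bias", "privacy", "human oversight"
    description: str
    likelihood: int         # assumed scale: 1 (rare) .. 5 (almost certain)
    impact: int             # assumed scale: 1 (negligible) .. 5 (severe)
    can_mitigate: bool = True
    can_transfer: bool = False

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def suggest_treatment(risk: AIRisk, appetite: int = 6) -> Treatment:
    """Suggest a default treatment; the risk owner still makes the final call."""
    if not (1 <= risk.likelihood <= 5 and 1 <= risk.impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    if risk.score <= appetite:
        return Treatment.ACCEPT
    if risk.can_mitigate:
        return Treatment.MITIGATE
    if risk.can_transfer:
        return Treatment.TRANSFER
    return Treatment.AVOID


# Made-up example risks.
risks = [
    AIRisk("credit-scoring", "bias", "Lower approval rates for a protected group", 3, 5),
    AIRisk("support-chatbot", "transparency", "Users unaware they are talking to AI", 2, 2),
    AIRisk("support-chatbot", "safety", "Harmful advice with no feasible safeguard", 3, 5,
           can_mitigate=False),
]

for r in risks:
    print(r.system, r.dimension, r.score, suggest_treatment(r).value)
```

Tagging each risk with a dimension such as bias or privacy makes it easy to confirm that every AI-specific concern was considered for every in-scope system.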

5. Build the policy and documentation backbone

Now turn governance into paperwork that means something. That includes the AI policy, AI objectives, risk assessment methodology, risk treatment plan, impact assessment process, incident response procedures, operational procedures, and the Statement of Applicability. The SoA is especially important because it records which Annex A controls apply, which do not, and why. Good documentation is not busywork. It is how you prove governance, preserve consistency, and survive audit scrutiny without chaos.
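The SoA must explain why each Annex A control is included or excluded, so it lends itself to a simple consistency check. The sketch below uses placeholder control IDs (`CTRL-01` and so on), not the real Annex A numbering, and the `SoAEntry` and `soa_issues` names are hypothetical. It flags three problems: entries with no justification, excluded controls marked as implemented, and implemented controls with no recorded evidence.

```python
from dataclasses import dataclass, field


@dataclass
class SoAEntry:
    control_id: str          # placeholder ID; use the real Annex A reference in practice
    title: str
    applicable: bool
    justification: str      # why the control is included or excluded
    implemented: bool = False
    evidence: list[str] = field(default_factory=list)


def soa_issues(entries: list[SoAEntry]) -> list[str]:
    """Flag entries an auditor would be likely to question."""
    issues = []
    for e in entries:
        if not e.justification.strip():
            issues.append(f"{e.control_id}: no justification for the applicability decision")
        if not e.applicable and e.implemented:
            issues.append(f"{e.control_id}: excluded but marked implemented")
        if e.applicable and e.implemented and not e.evidence:
            issues.append(f"{e.control_id}: implemented but no evidence recorded")
    return issues


# Made-up example entries.
soa = [
    SoAEntry("CTRL-01", "AI policy", True, "Required for all in-scope systems",
             implemented=True, evidence=["AI policy v1.2, approved by the executive sponsor"]),
    SoAEntry("CTRL-02", "AI system impact assessment", True,
             "Credit scoring affects individuals", implemented=True),
    SoAEntry("CTRL-03", "Third-party model supplier review", False, ""),
]

for issue in soa_issues(soa):
    print(issue)
```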

6. Implement controls inside real workflows

This is where many organizations trip. If your controls live only in policy documents, you do not have an AIMS. You have a slide deck. ISO 42001 implementation becomes credible only when controls show up in data management, model validation, human oversight, explainability, monitoring, incident response, procurement, and lifecycle reviews. Just as important, the AIMS should plug into the workflows teams already use. Experts consistently warn against building a parallel governance universe that engineers, product teams, and security teams quietly ignore.
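One way to put a control inside an existing workflow is a pre-deployment gate that a CI/CD pipeline runs before a model ships. The Python sketch below is hypothetical. The `ReleaseCandidate` fields and the four checks are assumptions chosen to mirror the validation, human oversight, risk, and monitoring controls mentioned above. They are not requirements quoted from ISO 42001.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReleaseCandidate:
    system: str
    model_version: str
    validation_report: Optional[str]   # ID or link of the model validation record
    risk_assessment_approved: bool
    oversight_owner: Optional[str]     # person accountable for human oversight
    monitoring_configured: bool        # e.g. drift and performance alerts in place


def deployment_blockers(rc: ReleaseCandidate) -> list[str]:
    """Return reasons to block the release; an empty list means the gate passes."""
    blockers = []
    if not rc.validation_report:
        blockers.append("missing model validation record")
    if not rc.risk_assessment_approved:
        blockers.append("risk assessment not approved")
    if not rc.oversight_owner:
        blockers.append("no human oversight owner assigned")
    if not rc.monitoring_configured:
        blockers.append("production monitoring not configured")
    return blockers


# Made-up release: validated and risk-approved, but nobody owns human oversight.
rc = ReleaseCandidate("credit-scoring", "2.4.0", "VAL-2025-031", True, None, True)
for problem in deployment_blockers(rc):
    print("BLOCKED:", problem)
```

In a pipeline, the calling step could exit with a non-zero status whenever the list is non-empty. The release would then stop in the tool engineers already use rather than in a separate governance portal.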

7. Train teams and measure performance

Awareness is not enough. Training needs to be role-based. Executives need clarity on accountability and management review. Data scientists need clear expectations for validation, documentation, and drift monitoring. Internal auditors need to know what good evidence looks like. At the same time, define a short list of KPIs that tell you whether the system is working, such as the percentage of AI systems with documented risk assessments, training completion rates, number of audit findings, incident response time, and time to close nonconformities. If you cannot measure it, you cannot improve it.
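Most of these KPIs reduce to simple ratios and durations. The sketch below computes three of them from made-up figures. The function names and input shapes are assumptions, and incident response time or audit-finding counts could be added in the same way.

```python
from datetime import date
from statistics import mean
from typing import Optional


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal; 0.0 when there is nothing to measure."""
    return round(100 * part / whole, 1) if whole else 0.0


def kpi_snapshot(systems_in_scope: int, systems_with_risk_assessment: int,
                 staff_in_scope: int, staff_trained: int,
                 nonconformities: list[tuple[date, Optional[date]]]) -> dict:
    """Compute a few AIMS KPIs; each nonconformity is (opened, closed or None)."""
    days_to_close = [(closed - opened).days for opened, closed in nonconformities
                     if closed is not None]
    return {
        "risk_assessment_coverage_pct": pct(systems_with_risk_assessment, systems_in_scope),
        "training_completion_pct": pct(staff_trained, staff_in_scope),
        "open_nonconformities": sum(1 for _, closed in nonconformities if closed is None),
        "avg_days_to_close_nonconformity": (
            round(mean(days_to_close), 1) if days_to_close else None
        ),
    }


# Made-up example figures.
snapshot = kpi_snapshot(
    systems_in_scope=10, systems_with_risk_assessment=8,
    staff_in_scope=60, staff_trained=45,
    nonconformities=[
        (date(2025, 1, 1), date(2025, 1, 15)),
        (date(2025, 2, 1), date(2025, 2, 11)),
        (date(2025, 3, 1), None),
    ],
)
print(snapshot)
```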

8. Audit internally, review with management, then certify

Before external auditors show up, audit yourself first. Experts stress that the first internal audit must happen before certification. Management review should then use the audit reports and findings to make decisions on goals, budgets, and responsibilities. After corrective actions are closed, the external audit typically happens in two stages. Stage 1 reviews scope, documentation, and readiness. Stage 2 tests whether the AIMS works in practice through interviews and evidence review. Many experts also note that certification is maintained through annual surveillance audits, with recertification after three years.
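The sequence above can be expressed as a readiness gate plus a rough audit calendar. This is a hypothetical sketch. The gate conditions paraphrase the order described above. The calendar assumes surveillance audits on each anniversary of certification and recertification due at year three. Your certification body sets the actual dates.

```python
from datetime import date


def ready_for_stage1(internal_audit_done: bool, management_review_done: bool,
                     open_corrective_actions: int) -> bool:
    """Gate for inviting the certification body, following the sequence above."""
    return internal_audit_done and management_review_done and open_corrective_actions == 0


def add_years(d: date, years: int) -> date:
    """Same calendar day N years later; Feb 29 falls back to Feb 28."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def certification_cycle(certified_on: date) -> dict[str, date]:
    """Approximate dates: annual surveillance, recertification after three years."""
    return {
        "surveillance_audit_1": add_years(certified_on, 1),
        "surveillance_audit_2": add_years(certified_on, 2),
        "recertification_due": add_years(certified_on, 3),
    }


print(ready_for_stage1(True, True, open_corrective_actions=2))  # prints False
print(certification_cycle(date(2025, 6, 1)))
```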

One final warning: do not turn ISO 42001 into documentation theater. Several experts call out the same mistakes repeatedly: treating the standard as paper compliance, under-scoping risky systems for convenience, and failing to integrate AI governance with existing security, risk, and data-governance processes. The organizations that get value from ISO 42001 are the ones that make it operational.

Conclusion

AI is moving fast. Governance has to move faster. For cybersecurity leaders, ISO 42001 is not just another framework to file away; it is the operating model for making AI safer, more explainable, and more defensible under scrutiny. The companies that win with AI will not just be the ones that build quickly. They will be the ones that can prove, under pressure, that their AI is governed, monitored, and improving. That is what a strong ISO 42001 roadmap delivers.

ISO 42001 Lead Auditor Training with InfosecTrain

If this roadmap gives clarity on how to implement ISO/IEC 42001, the next step is turning that knowledge into real-world capability. InfosecTrain’s ISO/IEC 42001 Lead Auditor Training is designed exactly for professionals who do not just want to understand AI governance, but want to audit, implement, and lead it confidently. This training goes beyond theory, helping you master AIMS controls, risk assessment, audit techniques, and compliance alignment with global frameworks. Whether you are a CISO, AI Governance Leader, or Compliance Professional, this is your opportunity to future-proof your career and become a trusted AI governance expert. Explore the program and take the next step toward becoming an ISO 42001-certified leader today.

Training Calendar of Upcoming Batches for ISO/IEC 42001:2023 Lead Auditor Training

| Start Date | End Date | Start - End Time | Batch Type | Training Mode | Batch Status |
|---|---|---|---|---|---|
| 13-Jun-2026 | 12-Jul-2026 | 09:00 - 13:00 IST | Weekend | Online | Open |
| 08-Aug-2026 | 06-Sep-2026 | 19:00 - 23:00 IST | Weekend | Online | Open |

Frequently Asked Questions

What is ISO 42001, and who needs it?

ISO/IEC 42001 is the first AI management system standard, and it applies to organizations of any size that develop, provide, or use AI-based products or services.

How long does ISO 42001 implementation take?

Most practical guides estimate about 6 to 12 months from scratch, with faster timelines possible for smaller organizations or those that already run ISO 27001-style management systems.

What documents are required for ISO 42001 certification?

Common core documents include the AIMS scope statement, AI policy, objectives, risk assessment methodology, risk treatment plan, Statement of Applicability, impact assessment process, internal audit program, and corrective action records.

How is ISO 42001 different from the NIST AI RMF?

NIST AI RMF is voluntary guidance for managing trustworthy AI risks, while ISO 42001 provides auditable management-system requirements for doing that work systematically.

What are the biggest ISO 42001 implementation mistakes?

The biggest mistakes are poor scoping (either trying to govern every AI asset at once or leaving risky systems out for convenience), treating governance as paperwork, and failing to embed controls into day-to-day engineering, risk, and audit processes.